Engineering’s Quiet Crisis: Why the Biggest Bottleneck in Product Development Is About to Break

Modern products are more complex than ever, but the way they get built has barely changed. This piece looks at the structural pressures forcing that to change, how AI is reshaping both simulation and workflow automation, and why we think engineering software is one of the more compelling areas in climate-adjacent technology.

Three forces are converging on engineering teams simultaneously, and none of them are cyclical.

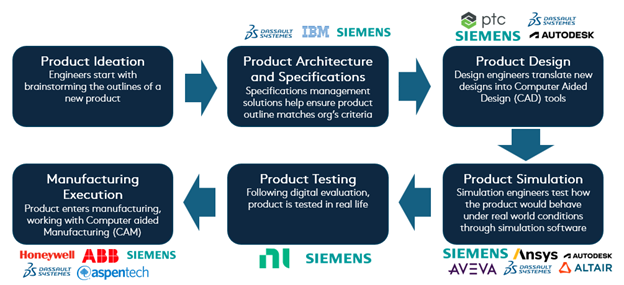

The first is speed. Product development cycles are compressing across every industrial sector. OEMs that once had five years to bring a new platform to market are now working in three. Automotive programmes that ran sequentially (design, then simulation, then manufacturing validation) are being pushed to run concurrently. The pressure is not to do things slightly faster. It is to fundamentally restructure how engineering work flows through an organisation.

The second is cost. Physical prototyping remains one of the most expensive stages of product development. A single prototype iteration for an automotive component can run into six figures, with lead times stretching weeks or months. Every additional physical test that could have been replaced by simulation is margin left on the table.

The third is talent. Engineering teams are losing experienced people faster than they can replace them. This is distinct from the broader manufacturing skills gap. The issue is specifically in R&D: senior engineers with decades of domain expertise are retiring or moving on, taking institutional knowledge with them. These are the people who know which simulation setups actually converge, which parameter ranges work for a given material, and which compliance shortcuts save three weeks. That knowledge was never in the system. It was in their heads.

These pressures are not new individually. What is new is their simultaneity, and the fact that a generation of software tools is emerging that can actually address them.

The tool landscape nobody designed

The average engineering team works across ten to twenty specialised software tools: CAD for geometry; CAE for simulation; PLM for lifecycle management; plus FEA, CFD, meshing, topology optimisation, cost estimation, compliance checking, and manufacturing planning. Each one is powerful in isolation. Together, they form an ecosystem that was never designed to work as one.

Manual handoffs between tools introduce errors and delay. An engineer designs a component in one environment, exports it for simulation in another, waits for results, transfers findings back, updates the lifecycle management system, and repeats. Every handoff is a potential failure point.

But the deeper problem is not the five minutes lost transferring a file. It is the weeks-long feedback loop between departments. A geometry change in design invalidates a simulation run. That delays a manufacturing feasibility check, which pushes back a compliance review. The iterative back-and-forth between design, simulation, manufacturing engineering, and regulatory teams is where the real time disappears. Tighter integration is not just about connecting data; it is about compressing that cross-functional cycle from weeks to hours and enabling genuine collaboration at scale.

The major vendors (Siemens, Dassault Systèmes, PTC, Autodesk) have tried to address this by building broader platforms. Siemens’ Xcelerator portfolio, for example, spans CAD, simulation, IoT, and manufacturing execution. But these suites still struggle with multi-vendor environments. No OEM runs a single vendor’s tools end to end. The fragmentation persists because the problem is not that any one tool is inadequate; it is the gaps between them.

AI is changing what is possible

The impact of AI on engineering is playing out across two fronts simultaneously.

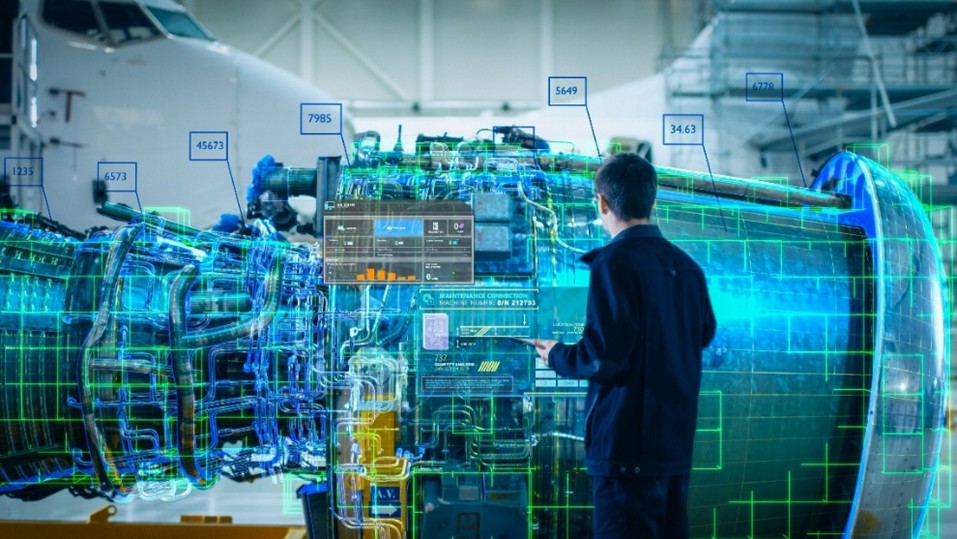

The first is simulation. Traditional simulation workflows require deep specialist expertise, expensive on-premise infrastructure, and solve times that can stretch from hours to days. A new generation of cloud-native simulation platforms is changing that equation by making CFD and FEA accessible to a broader set of engineers, not just dedicated analysts. AI is accelerating this further through surrogate models and physics-informed machine learning that can approximate complex simulations in near-real-time, turning what used to be overnight batch jobs into interactive design feedback. This does not replace high-fidelity simulation for final validation. But it opens up the early design phase to far more exploration than was previously practical.
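
To make the surrogate idea concrete, here is a minimal Python sketch under stated assumptions. The `expensive_simulation` function is a hypothetical stand-in for a slow solver run (it simply evaluates the textbook cantilever deflection formula), the beam dimensions, load, and deflection limit are illustrative, and the surrogate is a plain least-squares power-law fit rather than physics-informed machine learning. The pattern is what matters: a handful of solver runs train a cheap approximation, which then screens many candidates.

```python
import math


def expensive_simulation(thickness_m: float) -> float:
    """Hypothetical stand-in for a slow solver: cantilever tip deflection.

    Uses delta = F * L^3 / (3 * E * I) with I = b * h^3 / 12 for a
    rectangular section. All numeric values are illustrative assumptions.
    """
    force_n = 50.0        # tip load
    length_m = 1.0        # beam length
    youngs_pa = 70e9      # roughly aluminium
    width_m = 0.05        # section width
    inertia = width_m * thickness_m ** 3 / 12.0
    return force_n * length_m ** 3 / (3.0 * youngs_pa * inertia)


def fit_power_law(xs, ys):
    """Least-squares fit of y = a * x**b, done as a straight line in log-log space."""
    lx = [math.log(x) for x in xs]
    ly = [math.log(y) for y in ys]
    n = len(lx)
    mean_x = sum(lx) / n
    mean_y = sum(ly) / n
    sxx = sum((x - mean_x) ** 2 for x in lx)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(lx, ly))
    b = sxy / sxx
    a = math.exp(mean_y - b * mean_x)
    return a, b


# Run the "solver" only a handful of times to collect training data.
train_h = [0.010, 0.015, 0.020, 0.030]
train_d = [expensive_simulation(h) for h in train_h]
a, b = fit_power_law(train_h, train_d)


def surrogate(thickness_m: float) -> float:
    """Cheap approximation that replaces the solver during exploration."""
    return a * thickness_m ** b


# Sweep 41 candidate thicknesses (10 mm to 30 mm) using only the surrogate.
candidates = [0.010 + 0.0005 * i for i in range(41)]
limit_m = 0.005  # assumed maximum allowed tip deflection
feasible = [h for h in candidates if surrogate(h) <= limit_m]
thinnest = min(feasible)
print(f"fitted exponent: {b:.3f}, thinnest feasible: {thinnest * 1000:.1f} mm")
```

The shortlisted design would still go back through the full solver before sign-off; the surrogate only narrows the field.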

The second is workflow automation and orchestration. The first wave here was copilot-driven: tools that sit alongside the engineer, offering suggestions or accelerating specific tasks within a single application. Useful, but insufficient. A copilot still requires an engineer to orchestrate the overall process, manually moving outputs between tools. For a single design iteration, the overhead is manageable. For the kind of rapid, multi-variant exploration that modern product development demands, it becomes the binding constraint. The emerging shift is toward AI agents that execute multi-step workflows autonomously: running a design through simulation, checking results against compliance thresholds, iterating on geometry, generating reports, and flagging exceptions for human review.

“From an average four weeks of lead time, we are now at hours. And it’s not just reducing the lead time, we investigate 300 different designs in parallel where before we had one design from an engineer, sent it, and got it back.”

(Cost & Value Engineering Director at a major aerospace corporation)

The critical distinction across both fronts is auditability. Engineering does not tolerate black boxes. When a structural component goes into an aircraft, the simulation that validated it needs to be traceable, reproducible, and defensible. The most compelling approaches are not replacing engineering judgment with neural networks; they are using AI to orchestrate known, validated processes with a speed and consistency that humans cannot match manually.
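
One way to picture an auditable agent loop is the Python sketch below. It is illustrative only: `simulate_stress_mpa` is a hypothetical stand-in for a validated solver call, and the thickness step, stress limit, and iteration cap are made-up values. Every iteration is appended to a trail, and a run that does not meet the limit within the cap is flagged for human review rather than silently accepted.

```python
from dataclasses import dataclass, field


@dataclass
class AuditEntry:
    iteration: int
    thickness_mm: float
    stress_mpa: float
    passed: bool


@dataclass
class RunResult:
    status: str                 # "accepted" or "needs_review"
    thickness_mm: float         # last thickness that was evaluated
    trail: list = field(default_factory=list)


def simulate_stress_mpa(thickness_mm: float) -> float:
    """Hypothetical stand-in for a validated solver call (toy relation)."""
    return 1200.0 / thickness_mm


def run_workflow(start_mm, limit_mpa, step_mm=0.5, max_iterations=10):
    """Simulate, check against the limit, thicken the part, and log each step."""
    thickness = start_mm
    trail = []
    for i in range(1, max_iterations + 1):
        stress = simulate_stress_mpa(thickness)
        passed = stress <= limit_mpa
        trail.append(AuditEntry(i, thickness, stress, passed))
        if passed:
            return RunResult("accepted", thickness, trail)
        thickness += step_mm  # iterate on geometry and try again
    # Iteration cap reached without passing: hand over to a human.
    return RunResult("needs_review", trail[-1].thickness_mm, trail)


result = run_workflow(start_mm=4.0, limit_mpa=250.0)
for entry in result.trail:
    print(entry)
print(result.status, result.thickness_mm)
```

Because the trail records the inputs and outcome of every step, the same run can be replayed and checked later, which is the property the paragraph above is asking for.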

Letting engineers fix their own problems

Historically, automating an engineering workflow required software development skills. The engineer who spends three hours every week manually transferring simulation results into a reporting template knows exactly what needs to be automated. But building that automation typically requires filing a request with IT, waiting in a development queue, and hoping the resulting tool actually reflects the workflow as it exists in practice.

This is the same dynamic that played out in business operations a decade ago. The people closest to the inefficiency were the least equipped to fix it. Low-code and visual programming platforms changed that equation by letting domain experts build automations themselves.

The same principle is now reaching engineering. Visual workflow builders allow engineers to connect their design, simulation, and lifecycle management tools into automated pipelines without writing code. Adoption follows the same bottom-up pattern seen across enterprise software: it starts with one workflow and one team, then expands as others see the time savings. This changes who owns the automation, and shifts the economics from large top-down IT projects to incremental, team-level improvements that compound over time.

Knowledge that survives

Perhaps the most underappreciated consequence of digitising engineering workflows is what happens to institutional knowledge.

In most engineering organisations, the way things actually get done exists in two places: the official process documentation, and the heads of the people who have been doing it for twenty years. The official documentation is almost always incomplete. The real knowledge (the specific parameter ranges that work, the simulation setup that avoids convergence issues, the order of operations that saves three hours on a compliance check) lives in muscle memory and informal mentorship.

When an engineer builds a digital workflow, they encode that expertise into a reusable, shareable, auditable asset. The logic is no longer implicit. It can be versioned, improved, and deployed across projects, teams, and geographies. For OEMs managing thousands of variants across multiple platforms, this is not a convenience. It is a competitive necessity.
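
As a rough illustration of what encoding expertise into a versionable asset can look like, the Python sketch below stores a workflow as plain data and derives a short fingerprint from it. The workflow name, tool names, and parameter values are all hypothetical, and real workflow platforms will have their own formats. The point is only that once the logic is data, any change to a parameter becomes visible and traceable.

```python
import hashlib
import json

# Hypothetical workflow definition: the "tribal knowledge" lives in the params.
workflow = {
    "name": "bracket_static_check",
    "steps": [
        {"tool": "mesher", "params": {"element_size_mm": 2.0}},
        {"tool": "solver", "params": {"max_iterations": 200, "relaxation": 0.7}},
        {"tool": "compliance", "params": {"max_stress_mpa": 250.0}},
    ],
}


def fingerprint(definition: dict) -> str:
    """Return a short, deterministic hash of a workflow definition.

    Keys are sorted before hashing, so two definitions with the same
    content produce the same fingerprint regardless of key order.
    """
    canonical = json.dumps(definition, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()[:12]


print(workflow["name"], fingerprint(workflow))
```

Storing the fingerprint alongside each result ties an output back to the exact workflow definition that produced it.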

The companies that systematise their engineering knowledge will iterate faster, onboard new engineers more quickly, and maintain consistency across distributed teams. The ones that do not will keep losing ground every time someone walks out the door.

Design better, not just manufacture better

There is a specific climate thesis here that is worth stating directly: the biggest opportunity to reduce a product’s environmental footprint sits at the design stage, not downstream in manufacturing or logistics. Commonly cited sustainability frameworks (including work associated with the EU Ecodesign Directive) argue that a large majority of a product’s lifetime environmental impact is determined by decisions made during design. Material selection, geometry, weight, manufacturing method, and end-of-life recyclability are all largely locked in before a single part is produced.

This reframes the sustainability argument. It is not about optimising factories. It is about giving engineers the tools and the time to explore better design choices. Faster simulation means more alternatives evaluated. Automated topology optimisation means lighter components across the entire product, not just the high-value ones. Digital testing of sustainable materials that would be prohibitively slow to validate physically becomes feasible. The arithmetic is straightforward. Engineering teams with more capacity for exploration will produce greener products. The bottleneck has never been willingness. It has been capacity.

Where this leaves us

Engineering software is entering a period of meaningful structural change. The pressures (compressed cycles, rising prototyping costs, leaking talent) are not going away. And the enabling technologies (cloud-native simulation, AI-driven orchestration, low-code automation) are reaching the maturity required for enterprise adoption.

At Blume, our portfolio exposure across industrial and energy sectors gives us a grounded view of what engineering teams actually need, informed by relationships with the companies navigating these challenges daily. We see this space as a natural extension of our broader thesis: the most impactful climate technologies are often the ones that improve how industries fundamentally operate. Engineering sits at the very start of that chain. Better tools at the design stage cascade through everything that follows.